How the news breaks

I swear, sometimes this programming thing is really just the digital equivalent of baling twine and duct tape.

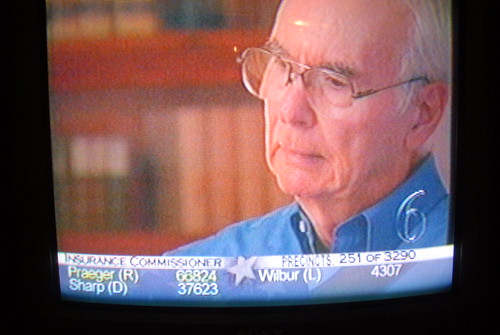

If you happen to be watching 6News in Lawrence last night, you’d have seen the election results crawling across the bottom of the screen:

Pretty much par for the course in terms of local TV coverage… but do you have any idea how that information gets there?

Let me break it down:

Votes are collected by the precincts, who report the totals to the Secretary of State’s office. The Secretary of State publishes those results on a web page, but their IT department is paranoid, so only a single IP – that of the Journal-World’s corporate firewall – is given access to that page of results.

(It almost goes without saying that the HTML of this result page is grossly invalid.)

Our web servers, however, sit outside of the corporate firewall on a separate network, and so are unable to see that page.

So, a small script on a Linux box under Matt’s desk downloads the page of results every time it changes, and then turns around and uploads it to a production server.
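For the curious, here is a rough sketch of the "only relay it when it changes" idea. The real script was shell and I'm not reproducing it here; this is Python, and it assumes change detection by comparing a hash of the page between polls, which is one common way to do it and not necessarily what that script did. The `fetch` and `upload` callables are hypothetical stand-ins for "download the results page" and "push it to the production server".

```python
import hashlib
from typing import Callable, Optional


def relay_if_changed(
    fetch: Callable[[], bytes],
    upload: Callable[[bytes], None],
    last_digest: Optional[str],
) -> str:
    """Fetch the page once and upload it only if its content hash changed.

    Returns the digest of the page just fetched, so the caller can pass it
    back in as last_digest on the next poll.
    """
    page = fetch()
    digest = hashlib.sha256(page).hexdigest()
    if digest != last_digest:
        # Hypothetical upload step; only runs when the content differs.
        upload(page)
    return digest
```

In practice you would call this in a loop with a short sleep between polls, feeding each returned digest into the next call.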

Another small script (on our server this time) scrapes this shoddy HTML (using BeautifulSoup, of course) and inserts the data into our database. At this point the data shows up online, but the journey to the airwaves is far from complete.
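To give a flavor of what scraping sloppy HTML into a database looks like, here is a minimal sketch. It is not our scraper: to stay dependency-free it uses the standard library's `html.parser` instead of BeautifulSoup, and SQLite instead of our actual database. The two-column candidate/votes layout and the `results` table are assumptions made up for the example.

```python
import sqlite3
from html.parser import HTMLParser


class RowScraper(HTMLParser):
    """Collect the text of each table row's cells, tolerating unclosed tags."""

    def __init__(self):
        super().__init__()
        self.rows = []          # completed rows, each a list of cell strings
        self._row = None        # cells of the row being read, or None
        self._in_cell = False

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self._flush()       # a new <tr> implicitly ends the previous row
            self._row = []
        elif tag in ("td", "th") and self._row is not None:
            self._row.append("")
            self._in_cell = True

    def handle_endtag(self, tag):
        if tag in ("td", "th"):
            self._in_cell = False
        elif tag in ("tr", "table"):
            self._flush()

    def handle_data(self, data):
        if self._in_cell:
            self._row[-1] += data

    def _flush(self):
        if self._row:
            self.rows.append([cell.strip() for cell in self._row])
        self._row = None
        self._in_cell = False

    def close(self):
        super().close()
        self._flush()           # keep a final row that was never closed


def store_results(conn, html):
    """Parse (candidate, votes) rows out of html and upsert them into SQLite."""
    scraper = RowScraper()
    scraper.feed(html)
    scraper.close()
    conn.execute(
        "CREATE TABLE IF NOT EXISTS results "
        "(candidate TEXT PRIMARY KEY, votes INTEGER)"
    )
    for row in scraper.rows:
        if len(row) != 2:
            continue            # not a candidate/votes row
        name, votes = row[0], row[1].replace(",", "")
        if not votes.isdigit():
            continue            # header row or junk
        conn.execute(
            "INSERT OR REPLACE INTO results (candidate, votes) VALUES (?, ?)",
            (name, int(votes)),
        )
    conn.commit()
```

The parser never assumes a closing tag will show up, which is the whole point when the markup is as broken as this page's was.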

Next, a third script pulls the data back out of the database and writes it to an Excel spreadsheet (using pyExcelerator).
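The export step is about as simple as it sounds. The sketch below is a stand-in, not the real thing: it writes CSV with the standard library rather than a true Excel file via pyExcelerator, and it assumes a hypothetical `results` table with `candidate` and `votes` columns in SQLite.

```python
import csv
import io
import sqlite3


def export_results(conn, out):
    """Write the results table to out as CSV, highest vote count first."""
    writer = csv.writer(out)
    writer.writerow(["candidate", "votes"])
    rows = conn.execute(
        "SELECT candidate, votes FROM results ORDER BY votes DESC, candidate"
    )
    writer.writerows(rows)


# Usage with an in-memory database and buffer (illustrative data only).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE results (candidate TEXT PRIMARY KEY, votes INTEGER)")
conn.executemany(
    "INSERT INTO results VALUES (?, ?)", [("Jones", 987), ("Smith", 1234)]
)
buf = io.StringIO()
export_results(conn, buf)
```

Swap the buffer for a real file handle and you have something a downstream machine can fetch.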

This spreadsheet is moved to a publicly-accessible URL.

Over at 6News, a Windows box sits and runs a batch file which, using a Windows binary of wget, downloads the Excel file.

Finally, the on-air graphics system reads this Excel file, and the data appears in the crawl.

If you’ve been keeping track, this process involves eight different machines:

- the Secretary of State’s vote machine,

- the Secretary of State’s web server,

- the Linux box under Matt’s desk,

- two of our web servers,

- our database server,

- the Windows box over at 6News, and

- the on-air graphics machine

and four glue scripts, in three different languages:

- the script that copies the results from the Secretary of State to our public server (shell),

- the data scraper (Python),

- the Excel sheet writer (Python), and

- the Windows downloader (batch)

Here’s the kicker, though:

Despite – or because of – all of this, our data was fresher all night than any of our competition’s, often by 30 minutes or more.

Baling twine and duct tape, man…